Accelerate Your SaaS: Best Practices for Fast Product Experiments

In the volatile world of early-stage SaaS, speed is not just an advantage; it’s a necessity. The cost of building unwanted features is too high, and the market waits for no one. This guide explains why fast product experiments are indispensable for reducing risk, accelerating learning, and reaching product-market fit sooner. By embracing rapid validation, early-stage companies can make smarter, faster, and more user-aligned product decisions, so that development effort goes toward meaningful growth.

The Imperative of Speed: Why Early-Stage SaaS Needs Fast Experiments

Early-stage SaaS companies face immense pressure to prove their value and achieve product-market fit quickly. Traditional, lengthy development cycles are a recipe for failure, leading to wasted resources and missed opportunities. Fast product experiments offer a dynamic alternative, allowing teams to validate assumptions and gather critical user feedback with unprecedented speed. This approach drastically minimizes the financial and developmental risks associated with building features that users ultimately don’t need or want.

Minimizing Risk & Uncertainty

One of the biggest challenges in early-stage SaaS is the inherent uncertainty. Will users adopt this feature? Does this solution truly address their pain points? Fast experiments are designed to answer these questions rapidly. By testing small, focused hypotheses, companies can quickly validate or invalidate ideas before investing significant resources. A feature research platform such as Watobu can streamline feature discovery, reducing initial guesswork and pointing towards high-impact areas for testing. The result is less development waste and a more efficient allocation of scarce startup resources.

Rapid Learning & Iteration

The ability to rapidly test, learn, and adapt is paramount. Fast experiments enable continuous iteration, transforming assumptions into validated knowledge. This agile approach helps teams quickly pivot away from non-viable ideas and double down on what truly resonates with users, preventing significant investment in features nobody wants. The faster you learn what works and what doesn’t, the quicker you can refine your product offering.

Achieving Product-Market Fit Faster

Ultimately, the goal of any early-stage SaaS is to find a strong product-market fit. Fast experiments act as a compass, guiding product development towards solutions that genuinely solve user problems and create significant value. By continuously validating feature ideas and user experiences, companies can reach this critical milestone much faster, which makes rapid experimentation one of the most powerful tools for accelerating the path to product-market fit.

What Defines a "Fast" Product Experiment?

Before diving into the ‘how,’ it’s essential to understand what constitutes a ‘fast’ product experiment. This section defines the core characteristics that differentiate rapid experimentation from traditional product development processes, highlighting its lean, focused, and iterative nature. It’s not just about speed, but about structured, intelligent velocity.

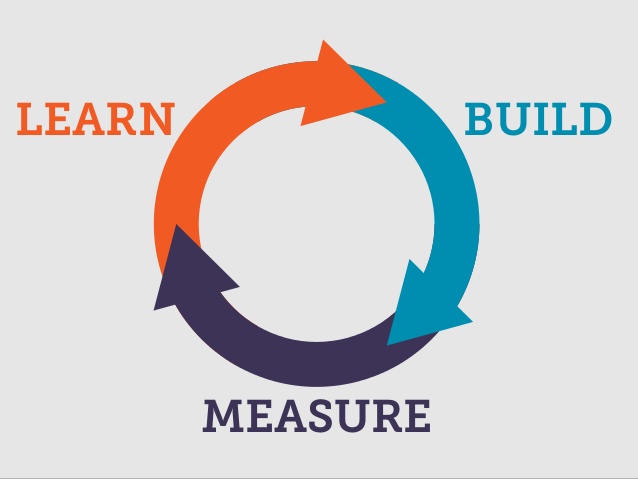

Lean Methodology Principles

Fast experiments are deeply rooted in lean methodology, prioritizing learning over extensive planning. This means starting with a clear hypothesis, designing the smallest possible test to validate it, and iterating based on the results. It’s about ‘build-measure-learn’ on repeat, ensuring that every effort contributes to actionable knowledge rather than just feature development. The emphasis is on efficiency and maximizing insights from minimal investment.

Focused Hypotheses & Clear Metrics

Unlike vague exploratory research, fast experiments are driven by specific, testable hypotheses. Each experiment aims to answer a precise question, with success or failure measured against clearly defined, quantifiable metrics. This focus ensures that every experiment yields concrete, actionable insights, avoiding ambiguous results that lead to further uncertainty.

Short Cycles & Actionable Insights

A ‘fast’ experiment typically runs for a short duration – days or a couple of weeks at most. The emphasis is on quickly gathering enough data to make an informed decision, rather than achieving statistical significance over a long period. The insights gained must be immediately actionable, guiding the next development sprint or strategic pivot. Rapid feedback loops are critical for maintaining momentum.

Core Principles for Designing Effective Experiments

Effective fast experiments aren’t just about speed; they’re about smart design. This section outlines the foundational principles that guide the creation of experiments that yield meaningful, actionable insights, ensuring your team learns efficiently and avoids common pitfalls.

Start with a Clear Hypothesis

Every experiment begins with a clear, falsifiable hypothesis. Instead of saying ‘users will like this feature,’ formulate it as ‘We believe adding X feature will lead to a Y% increase in Z metric for users of [specific persona]. We’ll know we’re right if [observable outcome].’ This specificity ensures that your experiment has a defined purpose and clear success criteria.
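One way to keep hypotheses falsifiable is to write them down as structured data rather than free text. The following is a minimal sketch in Python, not a prescribed format: the `Hypothesis` class, its field names, and the `is_supported` check are hypothetical names introduced for illustration, and the example values reuse the in-app tutorial scenario used elsewhere in this guide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """Illustrative container for the 'X will lift Z by Y%' template."""

    change: str               # X: the feature or change being tested
    metric: str               # Z: the metric expected to move
    persona: str              # the user segment the change targets
    expected_lift_pct: float  # Y: minimum relative increase that counts as success

    def statement(self) -> str:
        """Render the hypothesis in the sentence form used in the text."""
        return (
            f"We believe {self.change} will lead to a "
            f"{self.expected_lift_pct:g}% increase in {self.metric} "
            f"for {self.persona}."
        )

    def is_supported(self, baseline: float, observed: float) -> bool:
        """Return True when the observed relative lift meets the target."""
        if baseline <= 0:
            raise ValueError("baseline must be positive")
        lift_pct = (observed - baseline) / baseline * 100
        return lift_pct >= self.expected_lift_pct


# Example values are assumptions chosen for illustration only.
hypothesis = Hypothesis(
    change="adding an in-app tutorial for feature X",
    metric="feature adoption",
    persona="new users",
    expected_lift_pct=15.0,
)
print(hypothesis.statement())
print(hypothesis.is_supported(baseline=0.20, observed=0.24))
```

Writing the target lift into the record before the test starts makes it harder to reinterpret a weak result as a success afterwards.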

Define Measurable Success Metrics

Before launching, define what success or failure looks like. What metrics will you track (e.g., conversion rate, engagement, time on page)? How much change in these metrics will signify a meaningful outcome? Having clear targets prevents ambiguity and ensures results are interpretable, allowing for confident decision-making.

Isolate Variables & Control Groups

To accurately attribute changes to your experiment, isolate the variable you’re testing. Use control groups where possible (e.g., in A/B tests) to compare against a baseline. This ensures that observed changes are due to your experiment, not external factors, providing reliable data for your conclusions.
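A common way to form a control group is to assign each user to a variant deterministically, so the same user always sees the same experience. The sketch below shows one such approach using a hash of the user and experiment identifiers. The function name `assign_variant`, its parameters, and the identifier format are assumptions made for this example; hosted A/B testing platforms handle assignment in their own way.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, treatment_share: float = 0.5) -> str:
    """Deterministically place a user in 'control' or 'treatment'.

    Hashing the experiment name together with the user id keeps the
    assignment stable across sessions and independent between experiments.
    """
    if not 0.0 <= treatment_share <= 1.0:
        raise ValueError("treatment_share must be between 0 and 1")
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    # Interpret the first 8 bytes as an integer in [0, 2**64).
    bucket = int.from_bytes(digest[:8], "big")
    # Users whose bucket falls below the threshold get the treatment.
    threshold = int(treatment_share * 2**64)
    return "treatment" if bucket < threshold else "control"


# Hypothetical identifiers, used only to show the call.
print(assign_variant("user-42", "in-app-tutorial"))
```

Because the assignment depends only on the two identifiers, no lookup table is needed, and only the single variable under test differs between the two groups.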

Prioritize Learnings Over Perfection

The goal of a fast experiment is learning, not shipping a perfect feature. Embrace imperfection in your test setup to get a quick read. Focus on gathering just enough data to inform your next step, whether that’s refining the feature, abandoning it, or exploring a new direction. This mindset prevents analysis paralysis and encourages swift action.

A Step-by-Step Guide to Running Your Experiments

Moving from theory to practice, this section provides a sequential guide to successfully executing fast product experiments. From identifying the core problem to acting on insights, these steps form a robust framework for rapid product validation and iteration.

Identify the Problem/Opportunity

Begin by clearly identifying the biggest unknown or the most critical assumption about your product or user behavior. Utilize tools like Watobu to discover notable features from successful SaaS products, inspiring hypotheses based on proven patterns rather than pure guesswork. This reduces initial uncertainty and points towards high-impact areas for testing, leveraging lessons already embedded in successful real-world SaaS.

Formulate Testable Hypotheses

Translate your identified problem or opportunity into a testable hypothesis. For example, ‘We believe that adding an in-app tutorial for feature X (inspired by a similar successful feature on Watobu) will increase its adoption by 15% among new users.’ A well-defined hypothesis is the cornerstone of a successful experiment.

Choose the Right Experiment Type

Select the most appropriate experiment type to test your hypothesis: A/B tests for UI/UX variations, usability tests for workflows, concierge MVPs for direct user interaction, or ‘fake door’ tests for demand validation. Each has its strengths for rapid learning, and choosing wisely ensures maximum insight for minimal effort.

Build/Test Minimum Viable Features

Create the minimal viable version of the feature or change required for the experiment. This could be a simple landing page, a clickable prototype, or a basic functional element. The goal is to build just enough to test your hypothesis without over-investing in development that might be discarded.

Collect & Analyze Data Quickly

Implement robust tracking and analytics from the start. Monitor key metrics in real-time or near real-time. Don’t wait for perfect data; collect enough to draw a conclusion within your short experiment window. Prioritize clear signals over exhaustive analysis to maintain speed.
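For a conversion-style metric, a quick read can be as simple as comparing rates between control and treatment. The sketch below computes the relative lift and a two-sided p-value from a pooled two-proportion z-test, using only the Python standard library. The function name `compare_conversion` and the sample counts are assumptions for illustration. In a short experiment the p-value is best treated as a rough sanity check on the signal, not as a final verdict.

```python
import math


def compare_conversion(control_conv: int, control_n: int,
                       treat_conv: int, treat_n: int) -> dict:
    """Compare conversion rates between a control and a treatment group."""
    if control_n <= 0 or treat_n <= 0:
        raise ValueError("group sizes must be positive")
    p_c = control_conv / control_n
    p_t = treat_conv / treat_n
    # Pooled rate under the assumption that both groups convert equally.
    pooled = (control_conv + treat_conv) / (control_n + treat_n)
    se = math.sqrt(pooled * (1 - pooled) * (1 / control_n + 1 / treat_n))
    z = (p_t - p_c) / se if se > 0 else 0.0
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return {
        "control_rate": p_c,
        "treatment_rate": p_t,
        # Relative lift is undefined when the control rate is zero.
        "relative_lift": (p_t - p_c) / p_c if p_c > 0 else None,
        "z": z,
        "p_value": p_value,
    }


# Made-up counts, for illustration only.
result = compare_conversion(control_conv=100, control_n=1000,
                            treat_conv=130, treat_n=1000)
print(result)
```

Comparing `relative_lift` against the target set in the hypothesis gives a clear signal within the experiment window, while the p-value indicates how easily the difference could be noise.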

Act on Insights: Iterate or Pivot

Based on your data, make a clear decision: iterate on the feature, pivot to a new approach, or kill the idea. Crucially, document your learnings. This continuous feedback loop is the essence of fast experimentation, enabling smarter, faster, and more user-focused product decisions. Each pass through the loop reduces product uncertainty and moves the product closer to what users actually need.

Tools to Supercharge Your Experimentation

Leveraging the right tools can significantly enhance the speed and accuracy of your product experiments. This section highlights essential categories of tools that empower early-stage SaaS teams to design, run, and analyze experiments efficiently, turning data into actionable insights.

A/B Testing Platforms

Platforms like Optimizely or VWO enable easy setup and management of A/B, multivariate, and split URL tests. (Google Optimize, formerly a common choice, was discontinued in September 2023.) They provide statistical analysis to determine the significance of your results, taking guesswork out of UI/UX decisions and allowing for rapid, data-driven optimization.

Analytics & User Behavior Tracking

Tools such as Google Analytics, Mixpanel, Amplitude, and Hotjar offer deep insights into user behavior. They help track conversions, engagement, and user journeys, and they surface pain points, providing the quantitative and qualitative data necessary to measure experiment success and understand the ‘why’ behind the numbers.

Prototyping & Mockup Tools

For quick validation without extensive coding, tools like Figma, Sketch, Adobe XD, or Balsamiq allow teams to create interactive prototypes and mockups. These can be used for usability testing or ‘fake door’ experiments to gauge interest before significant development effort, saving time and resources.

User Feedback & Survey Platforms

Platforms like Typeform, SurveyMonkey, or UserTesting are invaluable for gathering direct feedback. They allow for quick surveys, polls, and remote user interviews, providing qualitative insights that complement quantitative data and deepen understanding of user needs and preferences.

Navigating Challenges: Avoiding Common Experimentation Pitfalls

While fast experimentation offers immense benefits, it’s not without its challenges. This section addresses common mistakes early-stage SaaS teams make and provides strategies to avoid them, ensuring your experiments truly drive progress and deliver meaningful value.

Lack of Clear Goals

Without clearly defined goals, experiments can become unfocused and yield ambiguous results. Ensure every experiment is tied to a specific question or KPI, avoiding the trap of ‘testing for testing’s sake.’ A well-defined objective acts as a compass, guiding your efforts and making results interpretable.

Over-engineering Experiments

Resist the urge to build a perfect, fully-fledged feature for an experiment. Over-engineering delays the learning process and defeats the purpose of ‘fast.’ Embrace MVPs and prototypes to get feedback quickly, remembering that the goal is to learn, not to ship a polished product from day one.

Ignoring Negative Results

Negative results are still results! They provide valuable information on what doesn’t work, saving future development effort. Don’t shy away from experiments that fail to validate your hypothesis; learn from them and iterate. These ‘failures’ are crucial for steering your product in the right direction.

Data Overload Without Action

Collecting vast amounts of data without a clear plan for analysis and action is a common pitfall. Focus on key metrics, analyze them promptly, and ensure there’s a defined next step for every experiment, whether it’s building, iterating, or discarding. Data is only valuable when it leads to informed decisions.

Conclusion: Build Smarter, Ship Faster

Embracing fast product experimentation is not just a methodology; it’s a mindset that empowers early-stage SaaS companies to navigate uncertainty, validate ideas, and build products that truly resonate with users. By applying these best practices, teams can significantly reduce waste, accelerate learning, and make smarter, faster, and more user-aligned product decisions. Leveraging platforms like Watobu further enhances this process by providing access to a curated catalogue of real-world SaaS features, reducing guesswork and inspiring effective experiments from the start. Start experimenting today and transform your product development journey.

Frequently Asked Questions (FAQ)

What exactly is a 'fast product experiment'?

A fast product experiment is a rapid, focused test designed to validate or invalidate a hypothesis about a product feature or user behavior, typically completed within a short timeframe (days to weeks) to generate quick, actionable insights.

Why are fast experiments so crucial for early-stage SaaS companies?

Fast experiments help early-stage SaaS companies reach product-market fit quickly, minimize wasted resources, iterate rapidly on real user feedback, and adapt to market changes without extensive upfront investment.

How do you define success in a fast product experiment?

An experiment is successful if it provides clear, actionable insights that allow you to make an informed decision – whether that’s validating a feature, disproving a hypothesis, or identifying a new direction. Success is defined by learning, not necessarily by immediate positive results.

Can you give some examples of fast product experiments?

Examples include A/B testing different call-to-action buttons, running a concierge MVP to manually deliver core value, conducting rapid usability tests on a new UI flow, or using a ‘fake door’ test to gauge demand for a non-existent feature.

How can a feature research platform aid in running fast product experiments?

Platforms like Watobu streamline feature discovery by providing curated examples of successful SaaS features, which can inspire hypotheses for experiments, reduce guesswork, and help teams design more user-aligned tests from the outset.